Kubernetes 1.36 is the upgrade that quietly rewrites your RBAC

The headline features in 1.36 are user namespaces and SELinux. The thing that will actually bite you on Monday is a single locked-on feature gate that turns every nodes/proxy grant in your cluster into an audit finding.

If you operate a production Kubernetes cluster, the most consequential thing in v1.36 is not a new feature. It is the feature gate that can no longer be turned off, and what it does to every monitoring agent you have running today.

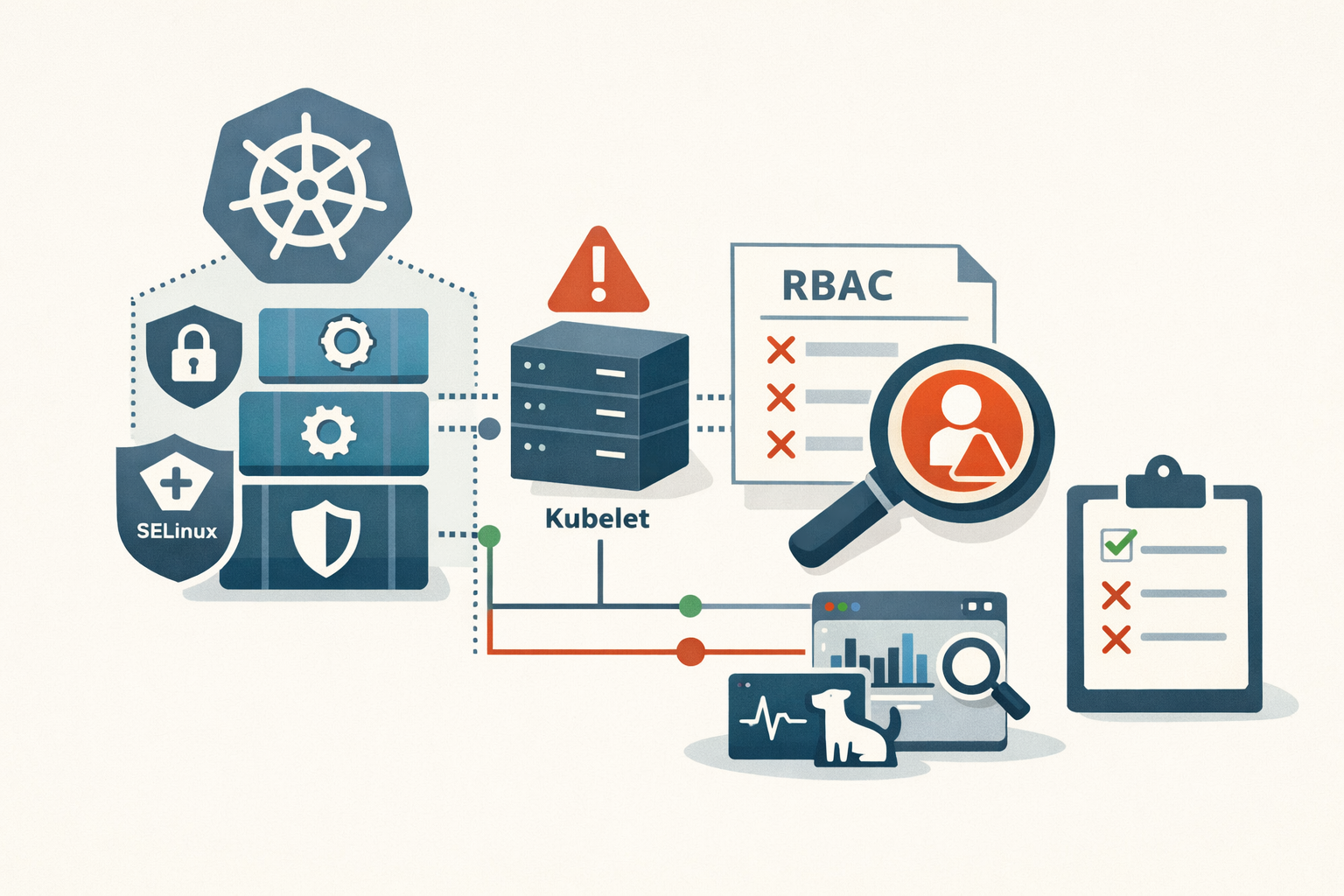

Kubernetes 1.36 (“Haru”) shipped April 22, 2026 with 18 stable graduations. The headlines went to user namespaces and SELinux mount-option relabeling, both genuinely good. The thing that will actually generate tickets is fine-grained kubelet authorization going GA under KEP-2862, with the KubeletFineGrainedAuthz feature gate now locked to enabled. You cannot disable it. And if your Prometheus node-exporter, Datadog agent, or any in-house scraper is running with the default nodes/proxy ClusterRole grant, you now have an audit finding in your cluster that did not exist last week.

This post is about what to test before you upgrade, not what the release notes say. If you want a feature list, the release blog is fine. If you want to know what is going to surprise you on the upgrade path, read on.

The kubelet authorization change is the real story

Before this release, granting a monitoring agent the ability to scrape kubelet metrics required nodes/proxy. That permission is effectively node-level superuser. A WebSocket GET against /exec through nodes/proxy could run arbitrary commands in any container on the node. It was the wrong shape of permission for “read /metrics,” and it has been the wrong shape for years.

The new model maps specific kubelet HTTP endpoints to dedicated subresources:

- /stats/* becomes nodes/stats
- /metrics/* becomes nodes/metrics
- /logs/* becomes nodes/log
- /pods and /runningPods/ become nodes/pods
- /healthz and /configz get their own nodes/healthz and nodes/configz
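The mapping above is small enough to express as a lookup, which is handy when you are working out which subresource a scraper's URL will hit. This is only a sketch of the list as written here: the function name is made up, and it assumes any path not in the list still requires nodes/proxy.

```shell
# Sketch only: map a kubelet HTTP path to the subresource named in the list above.
# Assumption: any path not covered by that list still needs nodes/proxy.
kubelet_subresource() {
  case "$1" in
    /stats|/stats/*)                           echo "nodes/stats" ;;
    /metrics|/metrics/*)                       echo "nodes/metrics" ;;
    /logs|/logs/*)                             echo "nodes/log" ;;
    /pods|/pods/|/runningPods|/runningPods/)   echo "nodes/pods" ;;
    /healthz|/healthz/*)                       echo "nodes/healthz" ;;
    /configz|/configz/*)                       echo "nodes/configz" ;;
    *)                                         echo "nodes/proxy" ;;
  esac
}

# Example: kubelet_subresource /metrics/cadvisor
```

Run it against every path in your scrape configs. Anything that comes back as nodes/proxy is a grant you cannot narrow yet.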

The authorization flow still falls back to nodes/proxy if the fine-grained check fails, so nothing breaks the moment you upgrade. Your monitoring keeps working. What changes is that any ClusterRole granting nodes/proxy is now a finding any decent security review will flag, and the cluster admin who left it in place is on the hook for it. The grace period is whatever your auditor’s patience looks like.
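For an agent that only reads metrics and stats, the fine-grained replacement might look like the sketch below. The role name, file name, namespace, and service account are all hypothetical, and the resource list is an assumption about what a typical scraper needs, so adjust it to what your agent actually calls.

```shell
# Hypothetical role and file names. Grants read access to two kubelet
# subresources instead of nodes/proxy.
cat > kubelet-metrics-reader.yaml <<'EOF'
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  name: kubelet-metrics-reader
rules:
  - apiGroups: [""]
    resources: ["nodes/metrics", "nodes/stats"]
    verbs: ["get"]
EOF

# Not run here; try these against a staging cluster before binding the role.
# The service account below is a placeholder.
#   kubectl apply --dry-run=server -f kubelet-metrics-reader.yaml
#   kubectl auth can-i get nodes --subresource=metrics \
#     --as=system:serviceaccount:monitoring:prometheus
```

Once the agent works with the narrow role bound, remove the nodes/proxy rule from its old ClusterRole. Do not leave both in place.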

As of early 2026, these projects and vendors ship Helm charts with nodes/proxy in their default values: Prometheus node-exporter, kube-state-metrics, Datadog Agent, Dynatrace OneAgent, Elastic Agent. Some have shipped updated charts. Some have not. Check each one before you upgrade, not after.

SELinux relabeling: a real win, with one specific caveat

The second change worth paying attention to is SELinux mount-option relabeling going GA. If you run RHEL, Rocky, or AlmaLinux nodes with SELinux enforcing (you should), Kubernetes used to relabel PersistentVolume contents by walking every file and calling setxattr. On a volume with a million files, that produced pod startup delays measured in minutes. The new behavior passes the SELinux context as a kernel mount option, which the kernel applies atomically at mount time. O(1) cost. Volume size stops mattering.

This is a straightforward improvement and you do not have to do anything to opt in. The caveat is your CSI driver. The GA behavior depends on the CSI driver forwarding mount options through to the kernel. Drivers from storage vendors like NetApp and Pure Storage have not all been confirmed to handle this correctly in their current shipping versions. If you rely on third-party CSI plugins, the time to test this is in staging, with a real PVC, against the actual driver version you run in prod. Do not assume the latency improvement materializes just because you’re on 1.36.
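One way to confirm in staging that the context really arrives as a mount option is to look at the node's mount table. This is a minimal sketch, assuming a volume mounted this way shows a context= option in /proc/mounts. The function name and the sample path are hypothetical.

```shell
# Reads /proc/mounts-format lines on stdin. Succeeds if the given mount point
# carries a context= mount option (as opposed to fscontext=, defcontext=, etc.).
has_selinux_context_mount() {
  awk -v mp="$1" '
    $2 == mp && $4 ~ /(^|,)context=/ { found = 1 }
    END { exit !found }
  '
}

# On a node, with the real mount path of the PVC substituted in:
#   has_selinux_context_mount /var/lib/kubelet/pods/<pod-uid>/volumes/<plugin>/<pv>/mount < /proc/mounts \
#     && echo "context passed as mount option"
```

If the check fails for a volume served by your CSI driver, treat the startup-latency win as unproven for that driver version.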

What got removed, in order of how badly it hurts

Three removals will actually break workloads on upgrade. Not warnings. Outright failures.

IPVS mode in kube-proxy is gone. It was deprecated in 1.35 and is now fully removed. If your kube-proxy ConfigMap says mode: ipvs, kube-proxy will fail to start after the upgrade. The fix is to switch to iptables or nftables before you upgrade. The performance gap that originally justified IPVS has narrowed, but if you run 10,000+ Services, validate your conntrack table sizing after the cutover.
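Checking for this takes one grep over the kube-proxy configuration. The sketch below reads the config on stdin. The function name is hypothetical, and the kubectl line in the comment assumes a kubeadm-style ConfigMap named kube-proxy with a config.conf key, which may not match your distribution.

```shell
# Reads a kube-proxy configuration (YAML) on stdin.
# Succeeds if a line sets mode to ipvs, quoted or unquoted.
uses_ipvs_mode() {
  grep -Eq "^[[:space:]]*mode:[[:space:]]*[\"']?ipvs[\"']?[[:space:]]*(#.*)?$"
}

# Assumed kubeadm layout; not run here:
#   kubectl -n kube-system get configmap kube-proxy \
#     -o jsonpath='{.data.config\.conf}' | uses_ipvs_mode \
#     && echo "kube-proxy is in IPVS mode: switch before upgrading"
```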

gitRepo inline volumes are disabled with no re-enable path. The justification is supply chain risk and it is defensible. The migration is an init container with a git-sync image populating an emptyDir. This is mostly a small-shop problem because gitRepo never made sense at scale, but if you have it, your pods will not start.
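The init-container migration might look like the sketch below. Everything specific in it is a placeholder: the Pod name, the repository URL, the application image, and the git-sync tag, which you should pin yourself. The flags shown are the git-sync v4 style as I understand them, so check them against the version you pin.

```shell
# Writes a Pod manifest that replaces a gitRepo volume with an init container
# cloning into an emptyDir. All names, URLs and tags are placeholders.
cat > git-sync-pod.yaml <<'EOF'
apiVersion: v1
kind: Pod
metadata:
  name: app-with-repo
spec:
  initContainers:
    - name: fetch-repo
      image: registry.k8s.io/git-sync/git-sync:REPLACE_WITH_PINNED_TAG
      args:
        - --repo=https://example.com/org/repo.git
        - --root=/repo
        - --one-time
      volumeMounts:
        - name: repo
          mountPath: /repo
  containers:
    - name: app
      image: example.com/app:1.0
      volumeMounts:
        - name: repo
          mountPath: /srv/repo
          readOnly: true
  volumes:
    - name: repo
      emptyDir: {}
EOF
```

git-sync places the checkout behind a symlink inside its root directory, so the path your application reads may differ from what gitRepo gave you. Verify that in staging.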

The legacy AppArmor annotation is gone. container.apparmor.security.beta.kubernetes.io/<container-name> has been deprecated since 1.30 in favor of spec.securityContext.appArmorProfile. Any Pod using the annotation will fail admission. Helm chart maintainers should have addressed this; in-house manifests need a scan.
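For in-house manifests, the scan can be as simple as a recursive grep for the annotation prefix. The function name below is hypothetical, and the sketch assumes your manifests live as files in a directory tree. Rendered Helm output or live cluster objects need a different entry point.

```shell
# Lists files under a directory (default: current directory) that still
# contain the legacy AppArmor annotation prefix.
find_legacy_apparmor() {
  grep -rlF 'container.apparmor.security.beta.kubernetes.io/' "${1:-.}"
}

# Example: find_legacy_apparmor ./manifests
```

Each file it reports needs the annotation replaced with an appArmorProfile entry in the Pod or container securityContext.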

There is also a quieter breaking change worth flagging: the metric volume_operation_total_errors was renamed to volume_operation_errors_total for Prometheus naming compliance. Any alert keyed on the old name silently stops firing. You will not notice until a storage incident goes undetected, which is exactly the kind of thing that turns a small problem into a postmortem.
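Finding and rewriting the old name in alert rules is mechanical. Both helpers below are hypothetical sketches that treat rule files as plain text, so they will also touch comments and annotations that mention the metric.

```shell
# Lists rule files under a directory that still reference the old metric name.
find_old_volume_metric() {
  grep -rlF 'volume_operation_total_errors' "${1:-.}"
}

# Filter: rewrites the old metric name to the new one on stdin -> stdout.
migrate_volume_error_metric() {
  sed 's/volume_operation_total_errors/volume_operation_errors_total/g'
}

# Example:
#   migrate_volume_error_metric < rules/storage.yaml > rules/storage.yaml.new
```

If you upgrade nodes gradually, consider keeping an expression that matches both names until the last node is on 1.36. Otherwise the alert goes blind for whichever half of the fleet it no longer matches.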

Managed clusters: do not assume you can upgrade yet

If you run EKS, you cannot run 1.36 today. As of 2026-05-11, the current EKS standard-support ceiling is 1.35, released January 27, 2026. AWS has not published an EKS 1.36 date. Their historical pattern is a 5 to 10 week lag behind upstream, which, counted from the April 22 upstream release, puts a plausible window between late May and early July 2026, but AWS has not confirmed this. Watch the EKS version lifecycle page. If you are on EKS 1.35, you are not under version pressure; standard support runs through March 27, 2027.

AKS has 1.36 on the calendar: preview in May 2026, GA in June 2026. AKS LTS extends to June 2028. You can test in preview clusters this month.

GKE moved fastest. 1.36 hit the Rapid channel on April 28, 2026. Regular channel typically follows by 4 to 6 weeks. Stable channel is approximately August 2026, which is the channel most production workloads should track. If you self-manage on kubeadm, Talos, or Rancher, you can run 1.36 today.

The point is to time your upgrade preparation to your platform, not to the upstream release blog. EKS shops have months to prepare. GKE Rapid users have weeks of test runway before Stable promotes.

One test to run this week, regardless of timing

Whatever your platform timing looks like, there is one scan worth doing now, on the live cluster you have today:

kubectl get clusterroles,roles -A -o json | \
  jq -r '.items[] | select(any(.rules[]?; any(.resources[]?; . == "nodes/proxy"))) | .metadata.name'

That gives you the name of every ClusterRole and Role in your cluster that currently grants nodes/proxy explicitly. It does not catch wildcard grants such as * or */proxy, so check those separately. For each one, figure out what the agent actually needs (metrics, logs, stats, pod list) and draft the fine-grained replacement. Test in staging before you change anything in prod. The 1.36 upgrade does not break these grants, but it makes them visible in a way they have not been before, and every monitoring vendor on the list above is going to ship an updated chart eventually. The shops that draft the replacement now have a one-line upgrade later. The shops that wait will be doing it under pressure.

The digest watches the kubelet authz behavior, the CSI driver fallout, and the EKS release date for you. When any of those moves, you will see it the next morning.

Sources

- Kubernetes v1.36 Release Blog

- KEP-2862: Fine-Grained Kubelet API Authorization

- KEP-127: User Namespaces

- Kubernetes 1.36 New Security Features (Sysdig)

- AKS Supported Kubernetes Versions

- AWS EKS Kubernetes Version Lifecycle

- GKE Release Schedule

- Kubernetes Deprecated API Migration Guide

- kube-no-trouble (kubent)
